Why AI avatar tools are not ready for menopause education if they cannot show the women it affects most.

I am a Black, board-certified surgeon turned menopause physician and AI creative director.

I build my own masterclass campaigns. I write the scripts, design the slides, and use AI tools to help me record, edit, and repurpose the content. When I went into avatar tools like HeyGen, Kling, and Captions.ai to create video assets for a menopause masterclass, I expected at least one thing.

I expected to see women who look like me and my patients.

Midlife women. Women with visible age in their faces. Black and brown women in their fifties, not just a handful of ambiguous “diverse” options you can count on one hand.

That is not what I found.

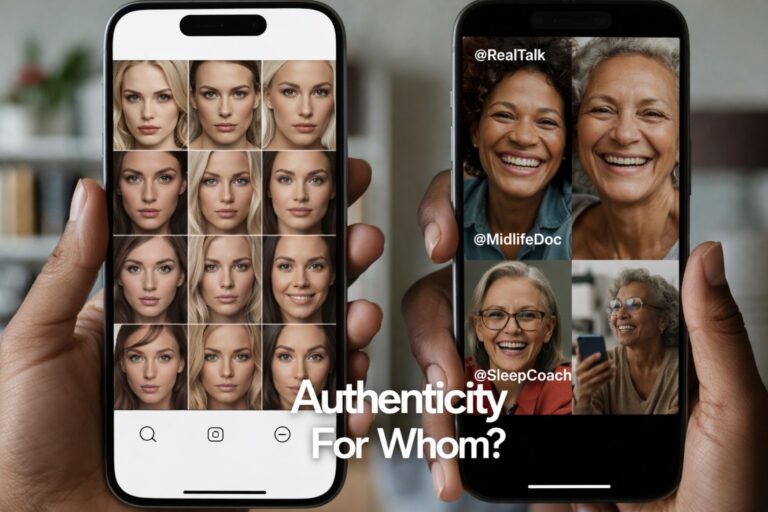

Instead, I found full libraries of young, mostly white or racially vague avatars, a single “Muslim woman” who is supposed to stand in for an entire global faith, and not one clearly midlife Black woman. The same pattern repeated when I went looking for voices that sounded like the midlife Black women in my family and my clinic.

I can work around this because I know how to build my own likeness and direct my own visuals. Many people cannot. For them, the message is simple.

These tools will accept your subscription. Right now, they behave as if midlife Black women are not a default human to design for. That is not a feelings problem. It is a product design problem you can fix.

This is not cosmetic. It is clinical and cultural.

I am not using these tools to make funny videos or lip-sync trends.

I am using them to explain what happens in perimenopause and menopause. To talk about night sweats, sleep disruption, weight changes, and nervous system shifts in a way that midlife women can actually receive. To build educational media that sits on real women’s phones and laptops.

When an AI avatar library only offers:

- young women in their twenties and thirties

- a few “diverse” faces that all feel like the same character in different wigs

- no clear representation of women 50 plus

the visuals tell a story before the script ever plays.

For a menopause campaign, that story is wrong.

It implies that menopause is a young woman’s issue. It quietly suggests that Black and brown women are an afterthought, if they register at all. It tells midlife women who have already been dismissed in exam rooms that they are now being edited out of the digital front door too.

In healthcare, trust starts long before a lab result or a prescription. It starts in the nervous system when a patient looks at a screen and thinks, “This is for people like me,” or, “This is for someone else.”

If my patient has to work to imagine herself into the avatar, the tool is not neutral. It is adding friction.

The DEI rollback and the quiet return of “default whiteness”

All of this is happening in a political moment where DEI programs are being dismantled, rebranded, or attacked outright.

We are hearing more messages like:

- “We are all just Americans. Why keep talking about race?”

- “Diversity initiatives mean unqualified people are getting jobs.”

- “We should not see color.”

At the same time, in AI tools, race and identity are very visible in who gets range and who gets scarcity.

Inside these platforms, you can scroll through:

- multiple white-presenting women in different ages, hair colors, and aesthetics

- polished male avatars in suits, hoodies, and startup uniforms

- then watch the variety collapse as soon as you look for older Black women, darker-skinned women, Indigenous features, or non-Western aesthetics

The product sees color very clearly when it is time to ship a default.

The only time race is treated as invisible is when accountability and design standards are on the table.

When I say Black women, I am not talking about a single shade. My own family stretches from alabaster to obsidian. Representation has to hold that full spectrum, not just one “acceptable” version of Blackness.

We are told to stop naming race and identity, while the tools themselves quietly keep centering one kind of body and one kind of life as the template. That is not an accident. It is a choice.

What I actually saw in HeyGen, Kling, and Captions.ai

On January 1, 2026, while building menopause masterclass assets, here is what my experience looked like.

I opened HeyGen and scanned their avatar options. I saw:

- multiple professional-looking white and racially ambiguous women

- a few younger Black- and brown-presenting options

- one visibly Muslim woman who could not possibly represent the full spectrum of Muslim identities

- no clearly midlife Black woman in the 50 plus range

When I moved into voice libraries, the same pattern held. I did not find a voice that sounded like my aunties, my cousins, or the midlife Black women who sit in my consults. The options felt either generic, overly polished, or tuned to a younger, whiter ear.

In Kling, as I worked through assets for my masterclass campaign, I saw a similar absence. Plenty of templates that would work for generic startup content. Nothing that made me think, “Yes, that looks like the kind of woman who would be talking honestly about hot flashes, memory changes, and sleep in a way my patients would instantly trust.”

In Captions.ai, which I like for some of its editing features, the AI avatar layer falls into the same narrow lane. Slim, smooth faces. Light to moderate aging at best. “Diversity” in the marketing language, but a very thin band of what diversity actually means once you start clicking.

None of these tools presented midlife Black women as a basic, everyday part of the human palette.

When you market a tool as “for everyone,” and this is what a Black midlife physician sees when she goes looking for herself and her patients, that is a product failure.

Why this matters for people who are not AI experts

I have the benefit of being a physician, artist, and early adopter of AI.

I know how to:

- create a digital twin that looks like me

- direct color, framing, and styling

- reject outputs that trigger aesthetic trauma or feel medically implausible

Most midlife women and many clinicians do not have that combination of skills, time, or interest. They will reach for the preset avatar, the default option, the model the tool tells them is ready to go.

If all the defaults look young, thin, and mostly white, then several things happen.

- Midlife women who are not thin and white get the message that these tools are not for them.

- Clinicians who want to reach Black and brown patients are forced to choose between misrepresentation and no representation.

- The same women who live at the intersection of medical bias, dismissal, and poorer outcomes are once again asked to see themselves in someone else’s skin.

We tell people AI is here to “democratize content creation.” Democracy without representation becomes another word for access to someone else’s template.

This is not about shaming white women. It is about fixing the palette.

This is not an argument against white women having avatars, being proud of their blue eyes and blond hair, or appearing in campaigns.

The problem is not that white women are present. The problem is that everyone else is conditional.

When an AI tool’s library is robust for one group and skeletal for others, it silently teaches who is allowed to be ordinary. White women get age range, lifestyle range, aesthetic range. Black women and other women of color get a few slots to cover an entire spectrum of culture, faith, and body types.

That is not diversity. That is rationed visibility.

If we are serious about saying, “We are all Americans,” then our tools should reflect all of America. Not just at the level of a banner on a landing page, but in the everyday defaults that real people have to choose from when they sit down to make a slide deck, a class, or a patient video.

What representation-ready looks like for AI avatar tools

If you want your AI product to be used in healthcare, education, and women’s health campaigns, this is the minimum standard.

A representation-ready avatar and voice library should:


1. Include midlife and older adults, not just the under-40 crowd.

Menopause, cancer, heart disease, and caregiving conversations are not being led by 23-year-olds.

2. Offer multiple midlife Black women and women of color, not a single token option.

Different skin tones, hairstyles, face shapes, and roles. Not just “the one Black woman” in the grid.

3. Provide voice libraries that sound like real communities.

That includes midlife Black women, Latinas, Indigenous women, and others with the cadence and warmth of real life, not caricature.

4. Be transparent about how these models are chosen and updated.

Tell us who is in the room when these faces and voices are selected, and how you will respond when users point out gaps.

5. Invite co-design with the people most affected.

Black women physicians. Muslim women across regions. Disabled and neuroaffirming communities. Not after launch as a PR patch, but at the beginning as partners.

This is not “extra” when you are selling into healthcare and wellness. It is part of product safety.

What creators and clinicians can do right now

While vendors catch up, there are small moves you can make today.

Ask your vendors clear questions

- “How many midlife avatars of color do you have?”

- “What is your roadmap for adding more?”

- “Who is on your advisory board for representation?”

Document what you see

- Take screenshots of the libraries as they are now.

- Keep dates.

- Notice what changes and what does not.
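If you are comfortable with a little Python, one way to keep those dates and counts in order is a simple running log. The sketch below is only an illustration, not a standard audit method: the file name, the column names, the category label, and the tool name "ExampleTool" are all hypothetical placeholders, and the counts are whatever you tally by eye from your own screenshots.

```python
import csv
from datetime import date
from pathlib import Path

# Hypothetical column layout for a personal audit log.
FIELDS = ["date", "tool", "category", "count", "screenshot"]


def log_observation(log_path, tool, category, count, screenshot="", on=None):
    """Append one dated observation to a CSV log, adding a header if the file is new."""
    path = Path(log_path)
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow({
            "date": (on or date.today()).isoformat(),
            "tool": tool,
            "category": category,
            "count": count,
            "screenshot": screenshot,
        })


def changes(log_path):
    """Return {(tool, category): (first_count, latest_count)} across all logged dates."""
    with Path(log_path).open(newline="", encoding="utf-8") as f:
        # ISO dates sort correctly as plain strings.
        rows = sorted(csv.DictReader(f), key=lambda r: r["date"])
    result = {}
    for r in rows:
        key = (r["tool"], r["category"])
        first = result.get(key, (int(r["count"]),))[0]
        result[key] = (first, int(r["count"]))
    return result
```

To use it, call `log_observation("avatar_audit.csv", "ExampleTool", "midlife Black women", 0, screenshot="shots/example.png")` each time you review a library, then call `changes("avatar_audit.csv")` before a vendor conversation to see what moved and what did not.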

Use your own likeness whenever possible for health content

Patients are often more comforted by seeing their actual clinician than by a generic stand-in.

Partner with studios that prioritize representation integrity

At Ceyise Studios, my focus is on AI-guided creative direction for women’s health and menopause communication that feels clinically plausible and culturally grounded.

You do not have to accept thin, biased palettes as the only option. You can ask for more.

This is not optional polish. It is product safety.

AI and machine learning are not toys in this context. They are part of how people will learn about their bodies, their hormones, their risks, and their choices.

If the visual and voice layers of these tools erase the very women who have already borne the brunt of medical harm, then those products are not ready for healthcare. They may be entertaining. They may be efficient. They are not safe in the deeper sense of the word.

Midlife Black women are patients. We are clinicians. We are buyers. We are founders. We are the ones subscribing to your platforms and using them to educate real humans. If your AI avatar library cannot show us, your product is not finished.