In my last two posts, I walked through what happens inside AI tools.

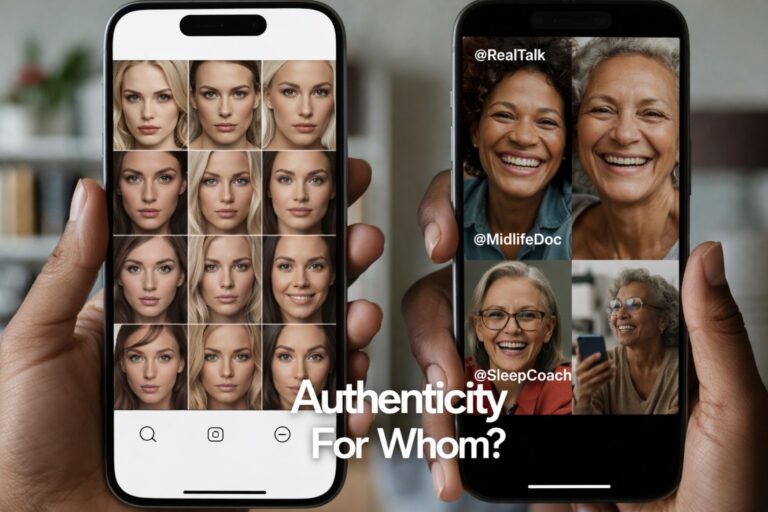

In Paid, But Not Portrayed, I documented what I saw when I went looking for midlife Black women in avatar tools like HeyGen, Kling, and Captions.ai. Full libraries of young, mostly white or racially vague faces. Not one clearly midlife Black woman ready to teach a menopause masterclass.

In the follow-up, I offered a representation-ready checklist for any AI tool that wants to be used in healthcare and women’s health communication. Age range. Skin tone range. Voice range. Co-design with the people most affected.

Those pieces looked at the tool layer.

This one looks at the platform layer.

Because even if we fix the palettes inside our AI apps, we still have to ask a harder question:

When Instagram talks about “authenticity” in an AI world, who is it actually prepared to see?

When “Authenticity” Becomes a Product Feature

On New Year’s Day, I scrolled into this Instagram carousel from Adam Mosseri, the head of Instagram, about creators, AI, and authenticity.

He wrote that:

- authenticity is becoming “infinitely reproducible”

- AI “slop” has a certain slick, over-smoothed look

- camera companies are betting on the wrong aesthetic

- the future of trust will depend less on what we see and more on who we believe

I agree with him on one point.

We are moving into an era where almost any visual can be generated, remixed, or faked. Authenticity will not be obvious at a glance. Skepticism is a necessary nervous system skill.

Where I differ is in what counts as the problem.

Mosseri’s framing treats authenticity as a mostly technical challenge.

- Can we label AI content?

- Can we fingerprint “real” media?

- Can creators stand out in a flood of synthetic polish?

Those are important questions. But for midlife Black women trying to navigate menopause, medical gaslighting, and AI at the same time, they are not the only questions.

We are also asking:

- Authentic for whom?

- Whose faces and bodies is Instagram prepared to show as ordinary?

- How do AI tools and platforms work together to make some women hyper-visible and others almost invisible?

The Missing Layer in the Authenticity Conversation

I am a Black woman, a board-certified surgeon turned menopause physician and AI creative director. I spend my days thinking about nervous systems, hormones, color, and how midlife women actually metabolize information on a screen.

When I hear a platform talk about authenticity, I do not just hear a design problem. I hear a trust problem.

Because in my clinic and my creative studio, authenticity is not a mood. It is a physiologic state.

- A woman’s shoulders drop when she sees a body that looks like hers on the screen.

- Her breathing slows when she hears a voice that sounds like someone she grew up with.

- Her brain is more likely to encode and recall health information if the messenger feels familiar and safe.

Authenticity is not just “raw” or “unfiltered.” It is the moment when the nervous system says, “This is probably safe enough to listen to.”

If midlife Black women rarely see themselves in AI-generated avatars, in menopause ads, or in the creator thumbnails Instagram chooses to amplify, then all the authenticity talk floats above a basic missing piece:

A feed cannot feel authentic to people who are not actually in it.

What Blogs 1 and 2 Revealed That Mosseri’s Carousel Did Not Name

From the first two posts in this series, we already know three things.

- AI tools still treat midlife Black women as edge cases.

In Paid, But Not Portrayed, the avatar libraries marketed as “for everyone” failed a simple test: show me a 52-year-old Black woman I would trust to explain hot flashes to my auntie.

- Representation is part of product safety, not optional polish.

In the representation-ready checklist, we named age, race, body type, and voice as core to whether a tool is safe to use in women’s health communication, especially for groups who already live with medical bias.

- Most clinicians and patients will use defaults.

They will not build custom avatars or fine-tune color grading. They will choose whatever the tool labels as “standard,” “professional,” or “recommended.”

Now put those three realities next to Mosseri’s carousel.

When he says authenticity will become “infinitely reproducible,” we have to ask:

- Reproducible using whose data?

- Reproducible in which faces and bodies?

- Who gets to be the visual shorthand for “real,” “relatable,” or “trustworthy” in an AI-heavy feed?

If the underlying tools are already skewed toward young, thin, white, or racially ambiguous women, then the platform’s authenticity layer will be skewed in the same direction.

You cannot sprinkle authenticity logic on top of biased inputs and expect justice to fall out.

AI Slop, Aesthetic Trauma, and Who Gets to Be “Raw”

Mosseri mentions “AI slop” as a category. He describes it as too slick, too smooth, too perfect.

He is right that many AI images have a particular sheen. I call this aesthetic sameness. In healthcare, it can tip into aesthetic trauma when bodies are rendered in ways that erase age, disability, and cultural specificity.

But here is the deeper tension:

- When the feed is full of polished white and racially vague faces, we call it “slop.”

- When Black women start showing up in unfiltered, non-smoothed images, we often call it “unprofessional.”

For my late-diagnosed autistic, sensory-sensitive menopause patients, the nervous system reads that double standard immediately.

These women see brands celebrating “raw” authenticity when it looks like a 27-year-old white woman in smudged eyeliner and a messy bun. They do not see the same energy for a 52-year-old Black woman speaking about night sweats in her satin bonnet.

So when platforms talk about rewarding “unproduced, unflattering images,” I want to know:

- Whose imperfections are we prepared to find charming?

- Whose reality will still be quietly shadow-banned as “too much,” “too political,” or “off-brand”?

This is where representation integrity meets algorithmic power.

Fingerprinting “Real” Media Is Not Enough

In the latter half of the carousel, Mosseri talks about:

- camera manufacturers cryptographically signing images

- platforms fingerprinting real media

- surfacing more context so people know who is behind an account

Those are good, necessary steps. Deepfakes are not abstract for Black women. We have seen how quickly our images and voices can be misused.

But fingerprinting “real” media does not solve the distribution problem.

If Instagram can prove a video is “real,” but the underlying recommendation system is still more comfortable showing:

- younger faces

- thinner bodies

- lighter skin

- creators who do not talk about race, disability, or menopause too directly

then authenticity becomes another filter, not a safeguard.

It becomes a brand aesthetic about sincerity, rather than a structural commitment to showing people who have historically been left out.

What an Authenticity-Ready Platform Would Consider

If Instagram and other platforms want to hold this moment with care, the question is not only “How do we detect AI?”

It is also:

How do we ensure that when authenticity becomes a metric, midlife women of color are not collateral damage?

An authenticity-ready platform would:

- Audit who shows up when AI aesthetic tools are used.

Run internal tests that mirror the ones I ran in Paid, But Not Portrayed. Who appears when teams use avatar tools, auto-caption tools, or AI B-roll tools on creator content about health and aging?

- Interrogate what “quality” means inside ranking systems.

If “high quality” quietly means “bright, thin, young, and light,” then older, darker, or disabled creators will always have to work harder for reach.

- Invest in representation as infrastructure, not as a campaign.

This means funding co-design with midlife women of color, clinicians, and disabled creators to understand how authenticity feels at the nervous system level, not just in brand decks.

- Treat health and identity content as high-stakes, not niche.

Menopause, mental health, and chronic illness are not fringe topics. They are central to how women use the internet to stay alive and functional.

- Be transparent about what it is learning.

If you discover that midlife Black women’s content is under-recommended, say so. Share the plan and the timeline to do better.

This is the level of honesty and specificity that would make a carousel about AI and authenticity land as more than a think piece.

What Creators and Clinicians Like Me Will Be Watching

As a menopause physician and founder of Ceyise Studios, I am not sitting in a corner hating AI or scrolling in secret resentment.

I am doing three things:

- Using AI where it actually helps my patients and my schedule

- Documenting where it fails my communities, in public and with receipts

- Designing alternatives that move us closer to representation integrity, not further away

For midlife Black women building businesses, families, and second lives inside these platforms, authenticity is not a trend. It is a survival need.

We do not have the luxury of pretending feeds are neutral.

So yes, Adam, authenticity will matter more.

And from where I sit, that means:

- not only labeling AI content, but asking why so much of it still looks the same

- not only rewarding “raw” aesthetics, but expanding who is allowed to be raw without penalty

- not only fingerprinting real media, but making sure the women most harmed by past erasures are fully in the frame

Until that happens, we will keep doing what we have always done.

We build in the gaps. We speak up in the comments. We design for the women the algorithm still cannot see without help.

And we remember that authenticity is not just what a piece of content looks like. It is how it lands in the body of the person who needs it most.